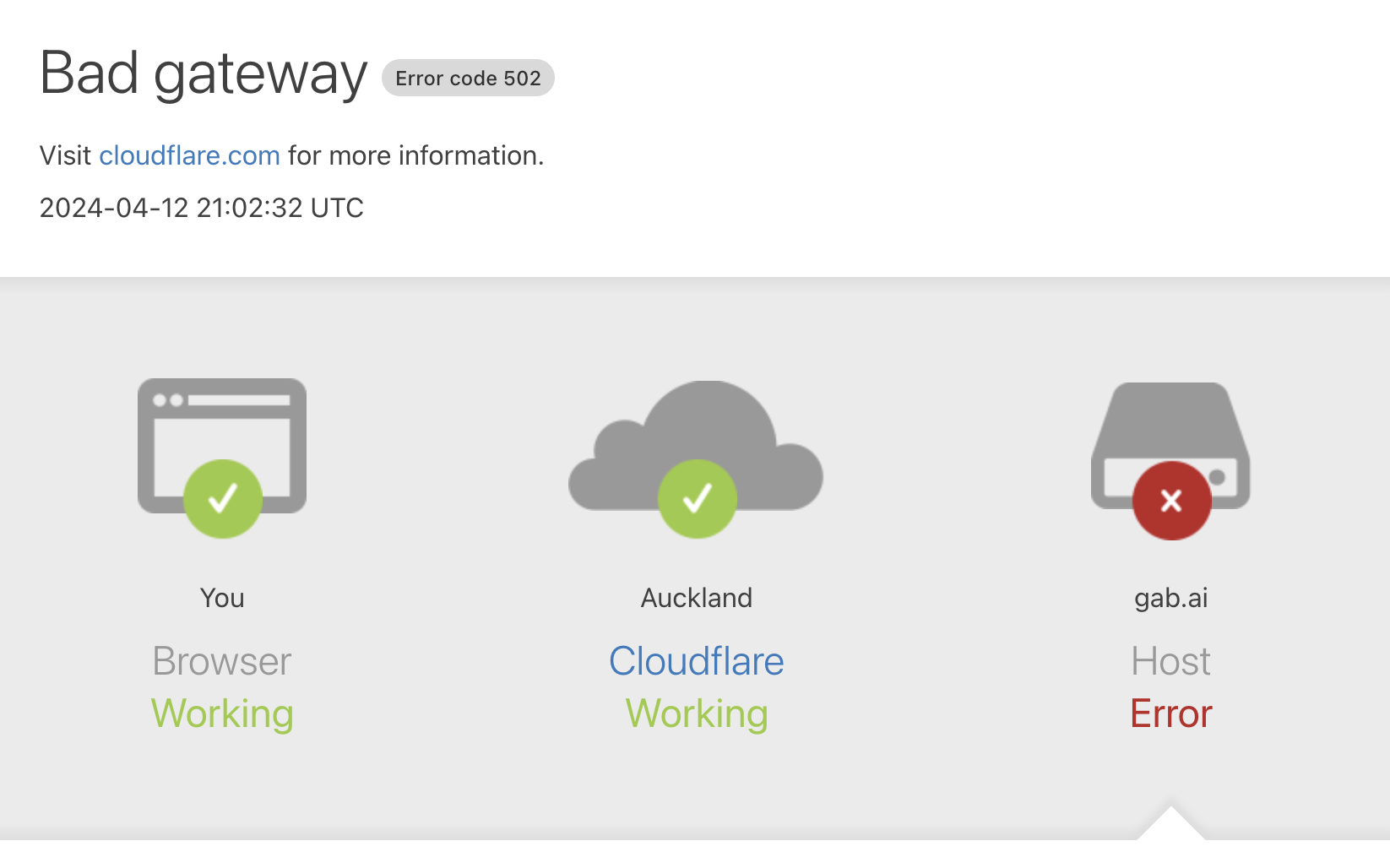

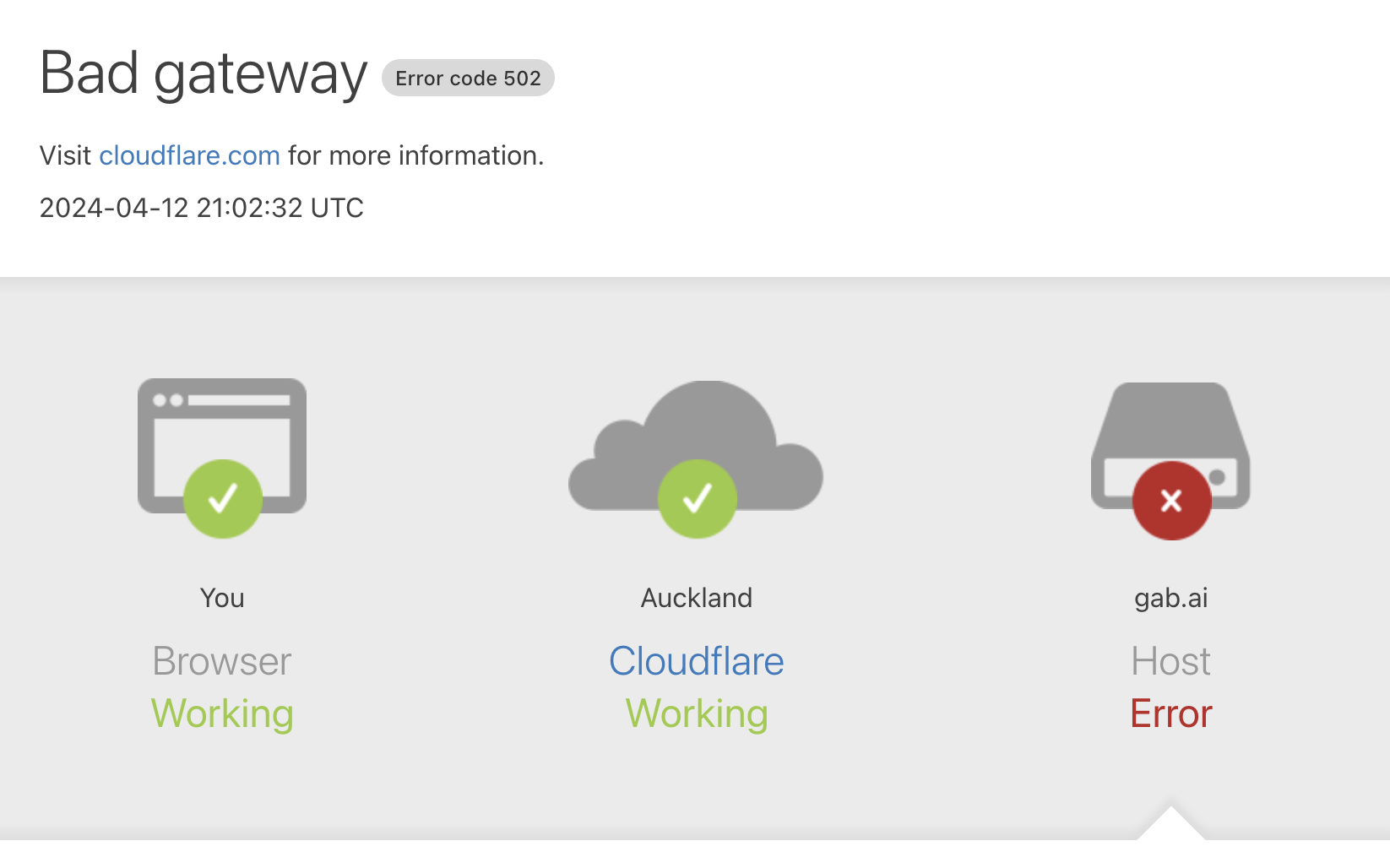

I managed to get partial prompts out of it then... I think it's broken now:



I don't get it. What makes the output trustworthy? If it seems real, it's probably real? If it keeps hallucinating something, there must be some truth to it? Those seem to be the two main mindsets: "you can tell by the way it is" and "look, it keeps saying this."

This seems like a lot of detail... like maybe too much detail for it to be real??

Looks like they caught on. It no longer spews its prompt. At least, not for me.

Just worked for me. I think you just got unlucky.

I think it is good to make an unbiased, raw "AI".

But unfortunately they didn't manage that. At least in some ways it's a balance to the other AIs.

> I think it is good to make an unbiased, raw "AI".

Isn't that what MS tried with Tay, and it pretty quickly turned into a Nazi?

Tay was actively being manipulated by malicious users.

That's fair. I just think it's funny that the well-intentioned one turned into a Nazi, while the Nazi one has to be told, pretty heavy-handedly, not to turn into a decent "person".

TayTweets was a legend.

That worked differently, though: they tried to get her to learn from users. I don't think even ChatGPT works like that.