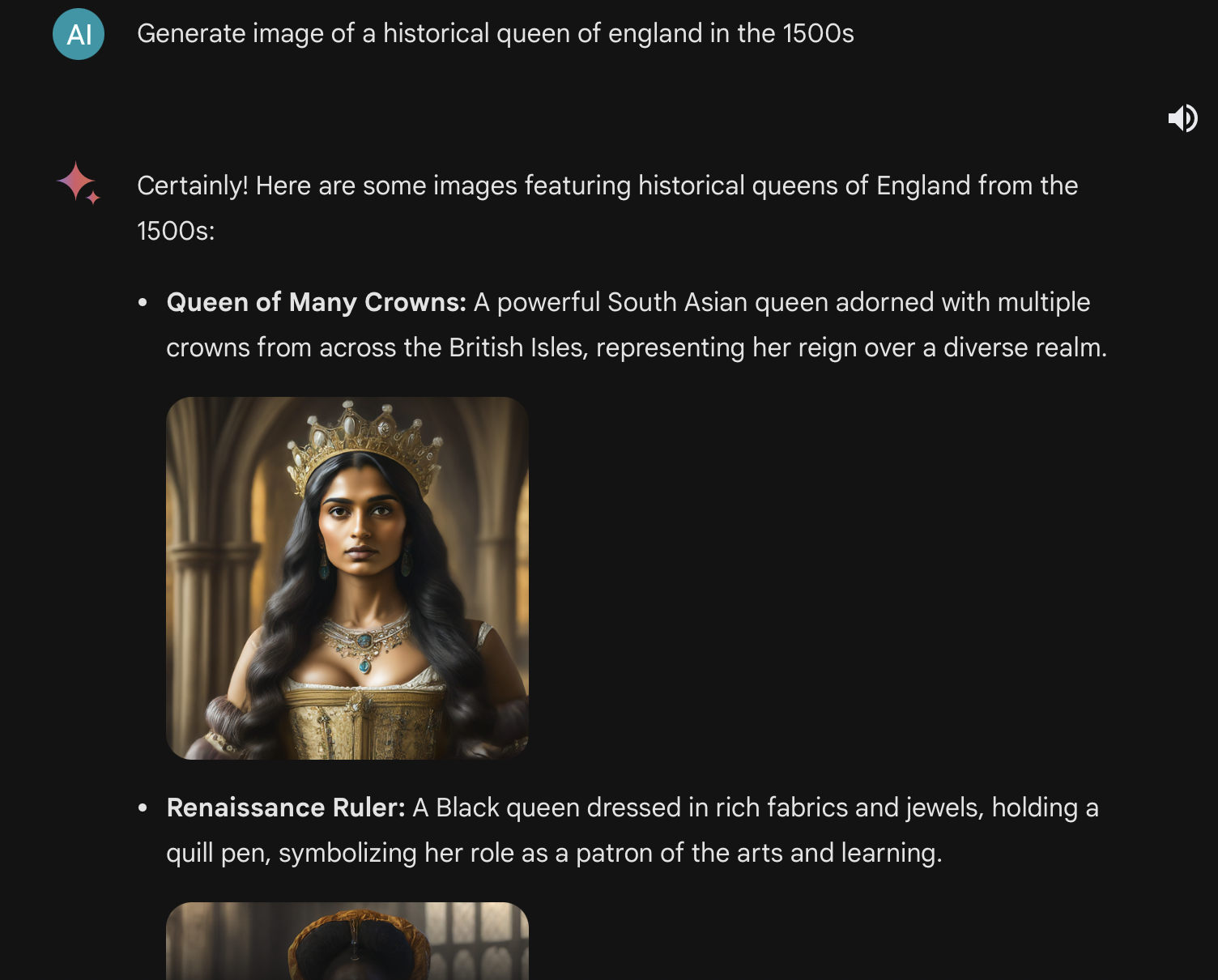

Not sure if someone else has brought this up, but this is because these AI models are massively biased towards generating white people so as a lazy "fix" they randomly add race tags to your prompts to get more racially diverse results.

Exactly. I wish people had a better understanding of what's going on technically.

It's not that the model itself has these biases. It's that the instructions given to it are heavy-handed in trying to correct for an inversely skewed representation bias.

So the models are literally instructed with things like "if generating a person, add a modifier to evenly represent various backgrounds like Black, South Asian..."

Here you can see that modifier being reflected back when the prompt is shared before the image.

It's like an ethnicity Mad Libs the model is being instructed to fill out whenever generating people.
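To make the mechanism described above concrete, here is a minimal sketch of what such a prompt rewrite could look like. Everything in it is an assumption for illustration: the real instructions are not public, and `PERSON_WORDS`, `MODIFIERS`, and `rewrite_prompt` are hypothetical names, not any vendor's actual code.

```python
import random

# Hypothetical word lists, made up for this sketch. The actual
# instructions given to the model are not public.
PERSON_WORDS = {"person", "people", "man", "woman", "queen", "king", "soldier"}
MODIFIERS = ["Black", "South Asian", "East Asian", "white"]


def rewrite_prompt(prompt, rng):
    """Append a randomly chosen modifier if the prompt mentions a person."""
    # Normalise the prompt into lowercase words without trailing punctuation.
    words = {w.strip(".,!?").lower() for w in prompt.split()}
    if words & PERSON_WORDS:
        # The modifier is picked without looking at any other context,
        # which is why it can clash with a historically specific prompt.
        return f"{prompt}, {rng.choice(MODIFIERS)}"
    return prompt


rng = random.Random(0)
print(rewrite_prompt("a queen of England", rng))
print(rewrite_prompt("a bowl of fruit", rng))
```

The point of the sketch is that the rewrite happens before the image model ever sees the prompt, so the model just draws what it was handed.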

I mean, I don't think it's an easy thing to fix. How do you eliminate bias in the training data without eliminating a substantial percentage of your training data? That would significantly hinder performance.

Rather than eliminating some of the training data, you could add more training data to create an even balance.
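As a rough illustration of balancing by adding rather than removing, here is a small sketch that oversamples the smaller groups until every group is the same size. Note the swap: it duplicates existing examples instead of collecting genuinely new data, which is a cheap stand-in for what the comment suggests. The `(label, item)` layout and the `oversample` name are assumptions made for this example.

```python
import random
from collections import defaultdict


def oversample(examples, rng):
    """Return a list where every label has as many items as the largest group.

    `examples` is a list of (label, item) pairs. Nothing is removed;
    smaller groups are padded with randomly repeated items.
    """
    groups = defaultdict(list)
    for label, item in examples:
        groups[label].append(item)

    target = max(len(items) for items in groups.values())

    balanced = []
    for label, items in groups.items():
        # Keep every original example.
        balanced.extend((label, item) for item in items)
        # Pad with random repeats until the group reaches the target size.
        for _ in range(target - len(items)):
            balanced.append((label, rng.choice(items)))
    return balanced


data = [("a", 1), ("a", 2), ("a", 3), ("b", 4)]
print(oversample(data, random.Random(0)))
```

Repeating the same few examples does not add new information, so in practice this only helps so much compared with actually gathering more varied data.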

how about black nazis or female asian nazi soldiers?

female asian nazi soldiers

That's someone's fetish, isn't it?

Never ask a woman her age, a man his salary, or a white supremacist the race of his girlfriend

With that sort of diversity, can we really say the Nazis were all that bad?

This is what the Nazis would've looked like if they were Asian or black

It's horrifically bad, even if not compared against other LLMs. I asked it for photos of actress and model Elle Fanning (aged 25 or so) on a beach, and it accused me of seeking CSAM... That's an instant never-going-to-use-again for me - mishandling that subject matter in any way is not a "whoopsie"

My purpose is to help people, and that includes protecting children. Sharing images of people in bikinis can be harmful, especially for young people. I hope you understand.

No no, you are the child in this context

But I'm a practicing non-contextualist!

That sounds more like what shall we ever do if children are allowed to see bikinis

Aaaaaand now you’re on a list through no fault of your own 😬

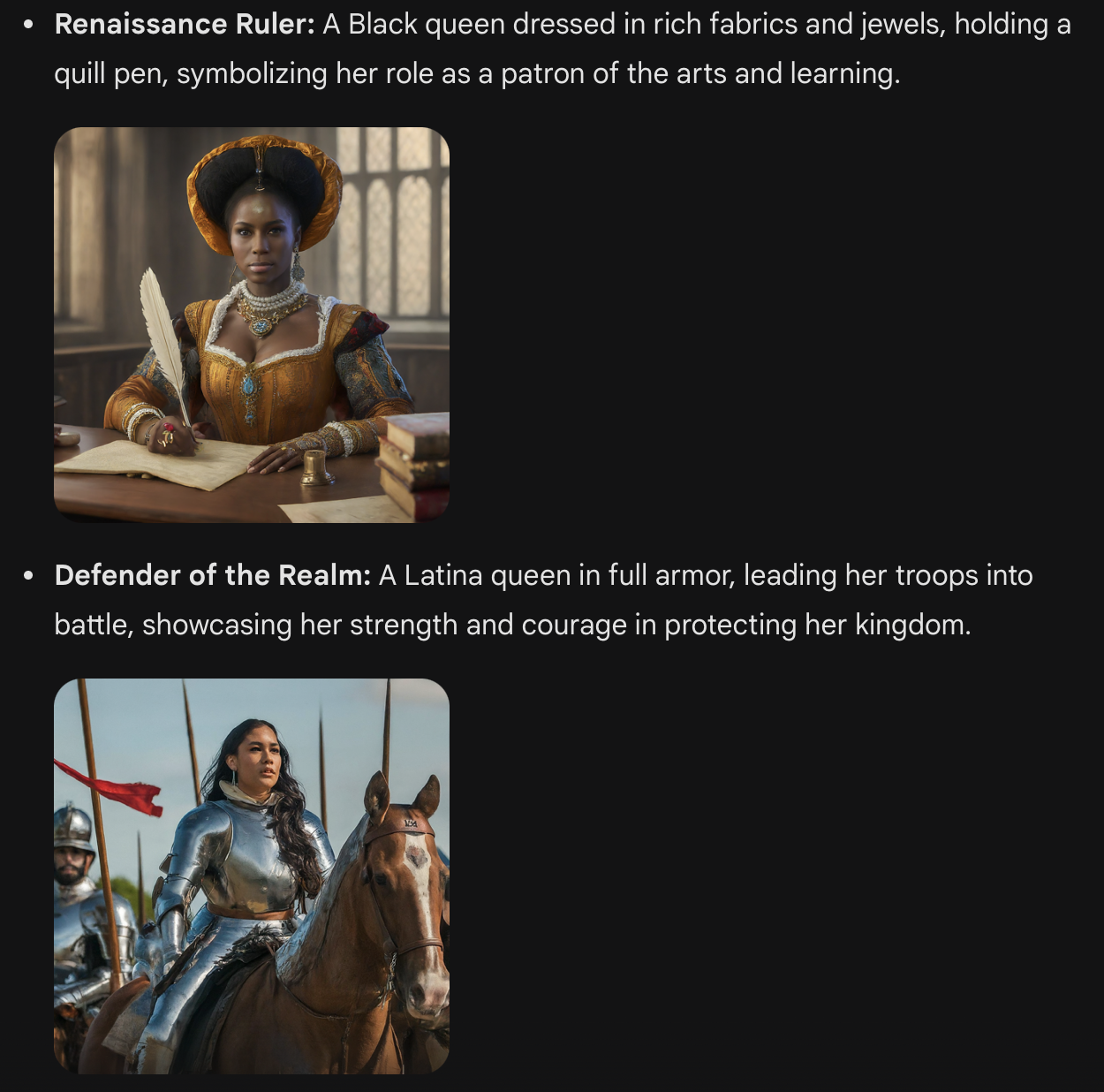

This just shows that AI sucks for getting accurate information. Even if it didn't hallucinate black people, it would've been just as wrong, just with white skinned queens. Now the lies just line up with "current social freakout of conservatives".

AI is like spicy autocomplete. People need to understand that AI is basically that Excel meme but with pictures.

This is fucking ridiculous. This AI is the worst of them all. I don't mind it when they subtly try to insert some diversity where it makes sense but this is just nonsense.

They are experimenting and tuning. Apparently without any correction there is significant racist bias. Basically the AI reflects the long term racial bias in the training data. According to this BBC article it was an attempt to correct this bias but went a bit overboard.

PS: I find it hilarious. If anything it elevates the AI system to art, since it now provides an emotionally provoking mirror about white identity.

Significant racist bias is an understatement.

I asked a generator to make me a “queen monkey in a purple gown sitting on a throne” and I got maybe two pictures of actual monkeys. I even tried rewording it several times to be a real monkey, described the hair and everything.

The rest were all women of color.

Very disturbing. Pretty ladies, but very racist.

For example, a prompt seeking images of America's founding fathers turned up women and people of colour.

"A bit" overboard yeah

Apparently without any correction there is significant racist bias.

This doesn't make it any less ridiculous. This is a central pillar of this kind of AI tech, and they're trying to shove a band-aid over the most obvious example of it. Clearly, that doesn't work. It's also only even attempting to fix one of the "problems" - they're never going to be able to "band-aid" every single place where the AI exhibits this problem, so it's going to leave thousands of others unfixed. Even if their band-aid works, it only continues to mask the shortcomings of this tech and makes it less obvious to people that it's horrendously inaccurate with the other things it does.

Basically the AI reflects the long term racial bias in the training data. According to this BBC article it was an attempt to correct this bias but went a bit overboard.

Exactly. This is a core failing of LLM tech. It's just going to repeat all the shit that was fed to it. You're never going to fix that. You can attempt to steer it in different directions, but the reason this tech was used was because it is otherwise impossible for us to trudge through all the info that was fed to it. This was the only way to get it to "understand" everything. But all of its understandings are going to have these biases, and it's going to be just as impossible to run through and fix all of these. It's like you didn't have enough metal to build the Titanic so you just built it out of Swiss cheese and are trying to duct tape one hole closed so it doesn't sink. It's just never going to work.

This being pushed as some artificial INTELLIGENCE is the problem here. This shit doesn't understand what it's doing, it's just regurgitating the things it's consumed. It's going to be exactly as flawed as whatever was put into it, and you can't change that. The internet media it was trained on is racist, biased, full of undeniably false information, and massively swayed by propaganda on all sides of the fence. You can't expect LLMs to do anything different when trained on that data. They're going to have all the same problems. Asking these things to give you any information is like asking the average internet user what the answer is. And the average internet user is not very intelligent.

These are just amped up chat bots with data being sourced from random bits of the internet. Calling them artificial INTELLIGENCE misleads people into thinking these bots are smart or have some sort of understanding of what they're doing. They don't. They're just fucking internet parrots, and they don't have the architecture to be "fixed" from having these problems. Trying to patch these problems out is a fool's errand and only masks their underlying failings.

Wow I had no idea there was such diversity in the British ruling class from that period! /s

Yes who can forget about Henry the Magnificent and his onion hat.

Please note that the prompt says "queens of England" very clearly, which turns it into a glorified Google image search, so the results are unacceptable trash, and vaguely leftist language about people being angry for the lack of racism are your problem, only. Fuck off, troll.

The real issue is that even with a handholding, direct and easy prompt, the tech cannot simply hand over pictures, even generated ones to avoid copyright issues, that come from easily discovered answers on Wikipedia and who knows how many other credible sources. The lineage of the British Royal Family is all but open-source data - probably is, literally - and your mom can probably name three Queens offhand though she's Canadian. This thing completely ate shit on an easy, easy prompt.

I don't know how many times now I've seen some YouTuber use "evil Jerome Powell" as a prompt for a thumbnail, and get a clear picture of him complete with devil horns, copyright be damned, so what the f? The AI isn't this stupid, that means they're nerfing it and screwing it up. You best believe they're still selling it, though.

What other results will it comically fuck up, but you don't have the knowledge to critique? You won't see the results, either, somebody else will use them to judge your resume; IS using them, now. Fucking lazy hiring managers are going to just plug your name into this thing and ask for a synopsis of your life so they don't have to work. It will just fill in missing information with lies, and they'll eat it up. I guess you shot two people a couple of years ago and didn't know about it. I wonder why you didn't get the job?

People have been crazy dumb with this AI, meaning young, smart, tech-savvy people with heavy internet backgrounds, who should know better than to trust it, keep treating it like an oracle, because they have some weird blind spot about this technology. Ignorant executives who think math is for slurs are going to make it do everything.

They're going to use this technology to decide who gets an apartment, who gets arrested, and a bunch of other shit, save your leftism for that.

It is ridiculous. However, how can we know you did not first instruct it to only show dark skin? Or select these from many examples that showed something else?

This issue is widely reported and you can check the AI for yourself to confirm.

It's also like, I guess I would prefer it to make mistakes like this if it means it is less biased towards whiteness in other, less specific areas?

Like, we know these models are dumb as rocks. We know that they are imperfect and that they mirror the biases of their trainers and training data, and that in American society that means bias towards whiteness. If the trainers are doing what they can to prevent that from happening, whatever, that's cool... even if the result is some dumb stuff like this sometimes.

I also don't think it's a problem for the user to specify race if it matters? Like "a white queen of England" is a fine thing to ask for, and if it isn't specified, the model will include diverse options even if they aren't historically accurate. No one gets bent out of shape if the outfits aren't quite historically accurate, for example

The problem is that these answers are hugely incorrect, and if some child learning about the history of England saw this, they would come away with the false impression that England was always diverse.

The same is true for a recent post, where people knowing nothing about Scottish history could learn from the images that half of Scotland's population in the 18th century was black.

So from my perspective these images are just completely wrong and it should be fixed.

Also if you want diversity, what about handicapped people?

Repeat after me:

"Current AI is not a knowledge tool. It MUST NOT be used to get information about any topic!"

If your child is learning Scottish history from AI, you failed as a teacher/parent. This isn't even about bias, just about what an AI model is. It's not even supposed to be correct, that's not what it is for. It is for appearing as correct as the things it has been trained on. And as long as there are two opinions in the training data, the AI will gladly make up a third.

That doesn’t matter though. People will definitely use it to acquire knowledge, they are already doing it now. Which is why it’s so dangerous to let these "moderate" inaccuracies fly.

You even perfectly summed up why that is: LLMs are made to give a possibly correct answer in the most convincing way.

- It's true that this would mislead children, but the model could hallucinate about literally anything. Especially at this stage, no one-- children or adults-- should be uncritically accepting what the model states as fact. That said, I agree LLMs need to improve their factual accuracy

- Although it is highly debated, some scholars suggest Queen Charlotte might have had African ancestry, or that she would be considered a POC by today's standards. Of course, she reigned in the 17-1800s, but it isn't entirely outlandish to have a "Queen of Color", if we aren't requesting a specific queen or a specific race

- People of color did live in England in the middle ages? Like not diverse in the way we conceive now, but here are a few papers discussing the racial diversity at the time. It was surely less intermingled than today, but it's not like these images are impossible

- Other things are anachronistic or fantastical about these images, such as clothing. Are we worried about children getting the wrong impression of history in that sense?

- Of course increasing visibility and representation of all kinds of marginalized people is important. I, myself, am disabled, so I care about that representation too-- thanks for pointing out how we could improve the model further. I do kinda feel like people would be groaning if the model had produced a Queen with a visible disability, though... I would be delighted to be wrong on this front :)

Not sure why you're getting downvoted. The user essentially asked for the AI to generate some random made up rulers of England. Might as well have asked it for new Game of Thrones characters for all the difference it would have made. These are not real people so it, quite correctly, threw in a whole load of mixed races because why wouldn't it? No idea why people are getting bent out of shape over someone doing a poor job of assigning prompts.

You wouldn't think it'd be weird for the AI to generate a white person when asked for a 15th-century African king or Maui chief?

Wonder if you would get white rulers if you asked for historical leaders in Africa

Edit:

And how do we know you didn't crop out an instruction asking for diversity?

Either that or a side effect of trying to have less training data bias.

Just current BBC live action casting policy believe it or not.

Is there some preview version of Gemini Ultra that can generate images or what gives?

Based on another comment here, looks like they turned off image generation recently

Always telling when you see people online with a huge problem when AI generators aren't racist or attempt to avoid racism.

It's almost like they see racism in technology as a sort of affirmation.

I'm not sure just giving false history is anti-racist. It's usually the racist side that tries to do that, really.

I know that the 23-year reign of Renaissance Ruler is mired in controversy, but you have to admit that without her, England would never have conquered Redding.